Analyzing your test results

Congratulations, you have your test results…now what? In this article, we will walk you through how to begin diagnosing and addressing any errors that are flagged in your Waldo test results.

Analyzing results for YOUR tests

While this article offers general guidance, many potential errors will be app-specific. The information below is a general guide to effective triage and investigation, not a one-size-fits-all, step-by-step approach. Never hesitate to reach out to our team for personalized guidance!

How do I know something went wrong?

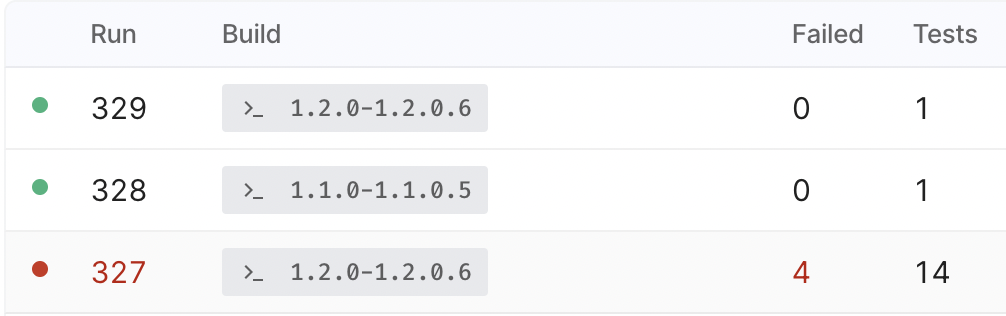

When you look at your test results, it will quickly become obvious whether an issue was detected. For starters, your Runs page shows which runs passed and which failed.

This is indicated by a red or green dot next to the run number, as pictured above.

Tracking the health of your test runs

If you go to your Tests page and select a test from your suite, you will also be able to see the status of your latest test run AND follow the health of that particular test over time.

A red, orange, or green square at the bottom of your Results Studio indicates the health of each test run, in sequence. For more on how to navigate the Results Studio, see the Results Studio Overview.

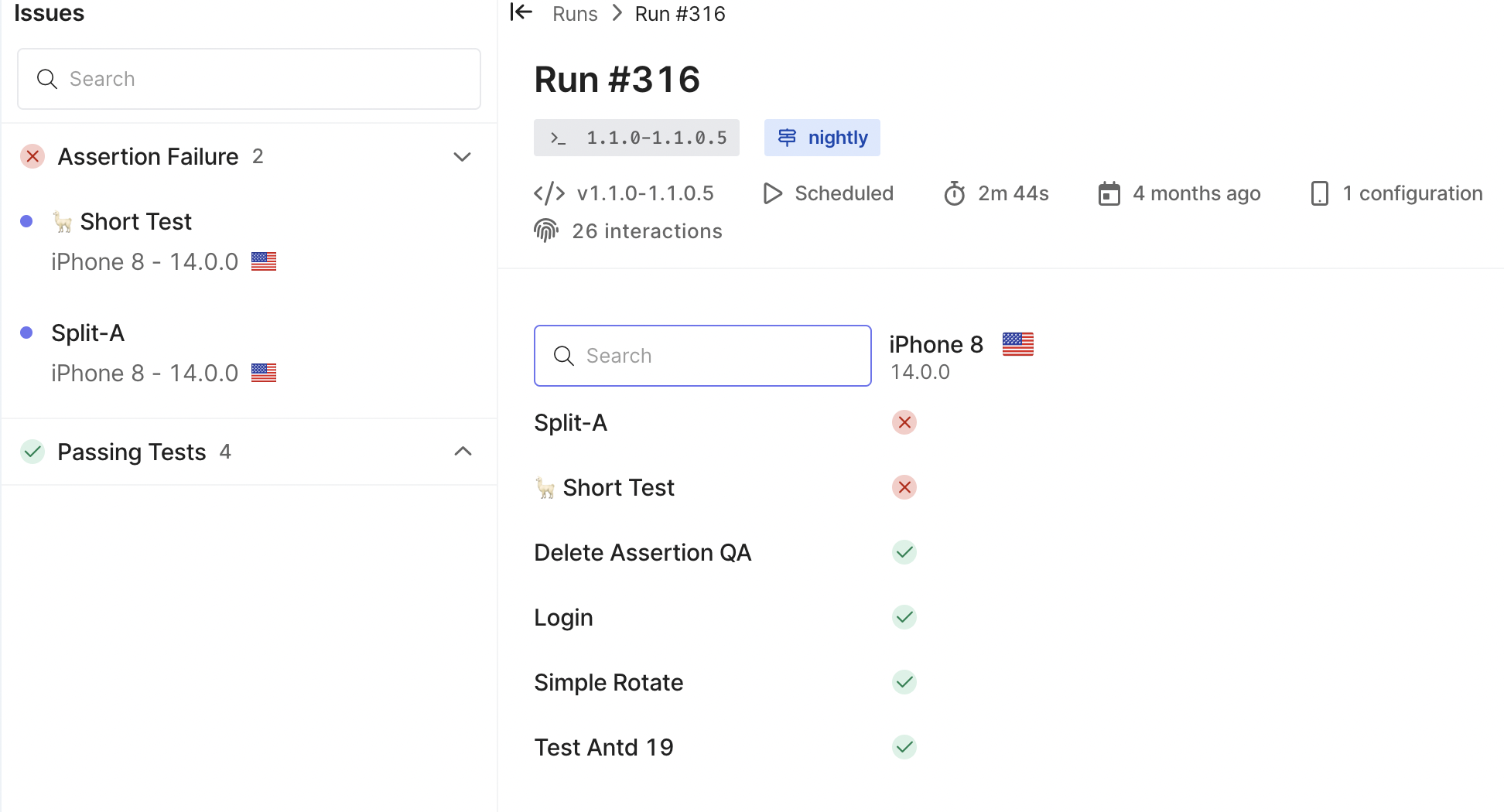

When you click into a failed run, you can see which specific tests failed and begin diagnosing what happened in a single, isolated test.

Click on the failed test you wish to investigate.

To begin troubleshooting the errors you see in Waldo and reproducing the issue:

1. Make sure this test doesn’t fail because of a dependency.

a. Open the dependency inspector in Results Studio to confirm that a test this one depends on did not cause the failure of the test you are examining.

2. Look at the last step in the failed run to see if you can visually identify what went wrong.

a. This may seem obvious, but visually reviewing what Waldo encountered while trying to run the test can help you identify errors quickly, with no additional work.

b. Open the step-by-step comparison view in your Results Studio to see what happened in the failing step compared to your testing baseline.

3. Click “Review” to view additional details on the step failure.

a. This highlights any interaction failures and assertion failures that occurred.

4. Download and inspect your logs, as well as any available crash report, to better understand what caused the failure.

a. The log includes only entries generated directly by your app, beginning at app launch.

b. Because of this, if your test depends on other tests, the log also includes entries from those tests.

5. If you need additional confirmation, download your build and launch it in your local simulator to see if you can reproduce the issue.

a. This can confirm where the issue lies: with your build or with Waldo.

b. You can launch the build in Sessions to see whether it works as expected, or launch it on your local machine using a different simulator/emulator.

How do I prioritize my results?

Below, we will walk through the different errors you may see in Waldo, what they mean, and how serious they generally are.

Assertions failed (low-medium severity)

Assertion failures are independent of whether the functional test passed (that is, whether Waldo got from point A to point B), unless the failed assertion is directly related to an interaction failure (Waldo was unable to move from point A to point B).

- Start by visually examining the failed step and identifying the failed assertion.

Adjusting assertions to accommodate wait times in your app

Sometimes, Waldo does not wait long enough for a screen to load before trying to click an element or perform an interaction.

This is likely because the required wait time was not covered by your assertions, or because of a timeout issue. For more information on addressing this issue, review this article.

Fixing assertions

If an assertion fails for a known and/or accepted reason, simply click into the failed step, select “Fix Assertion”, and save your changes.

- If the failure was caused by a UI bug, congrats! Waldo worked and you can alert your team.

Could not perform interaction (medium-high severity)

This means that Waldo was not able to perform the interaction leading to the next screen in the flow.

- This can happen when the button is not found, the UI/button has changed, the button has been removed, or a property of the button has changed.

If your UI has changed (intentionally) and it is causing your test to fail

You can address this by updating the test to reflect the changes in the baseline recording.

- In rare cases, this can also happen if Waldo clicked on the wrong button, which can be fixed by editing that interaction point.

Flaky Tests (medium-high severity)

A “flaky” test is one that yields different outcomes when played multiple times. To detect flakiness, Waldo always retries an action whenever we see any kind of error while replaying a test:

- if the retry has the exact same result, we can categorize the test as either "crash", "error" or "cannot replay".

- if the retry does NOT have the same result, we categorize the test as flaky.
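The retry rule above can be sketched as a small decision function. This is a minimal illustration, not Waldo's implementation: the `Outcome` enum and the `classify` function are hypothetical names, and the sketch assumes an errored action is retried exactly once before the test is categorized.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    # Hypothetical labels for a single replay attempt; the failure names
    # mirror the categories mentioned in this article.
    PASS = "pass"
    CRASH = "crash"
    ERROR = "error"
    CANNOT_REPLAY = "cannot replay"


def classify(first: Outcome, retry: Optional[Outcome] = None) -> str:
    """Categorize a replay using the retry-on-error rule described above."""
    if first is Outcome.PASS:
        return "pass"  # No error occurred, so no retry is needed.
    if retry is None:
        raise ValueError("an errored replay must be retried before it can be categorized")
    if retry is first:
        return first.value  # Same result twice: a consistent failure.
    return "flaky"  # Different result on retry: the outcome is not reproducible.
```

For example, `classify(Outcome.ERROR, Outcome.PASS)` returns `"flaky"`, while `classify(Outcome.CRASH, Outcome.CRASH)` returns `"crash"`.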

There are two leading causes for flakiness:

- Your app is flaky.

- Waldo needs to be given better instructions to ensure interactions are attempted at the right time.

Example: wait time causing flakiness

Imagine that your app allows the user to upload a photo, displays the photo as it is uploading, and (once the upload is complete) allows the user to tap on the photo to navigate to another screen.

During recording, everything works correctly. Upon replay, however, if the timing is off, the tap on the photo occurs too soon and nothing happens because the upload has not finished.

One solution to this problem is to add an assertion on any element that will only appear after uploading completes (perhaps a checked checkbox, or some informative text). See Assertions to learn more.

Another solution is to make Waldo wait longer before attempting the interaction. This should only be necessary if (in the example above) uploading the photo takes more than 30 seconds. To force Waldo to wait longer on that particular screen, you can simply update the time limit (see Time Limit for more details).
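Both solutions boil down to the same idea: only interact once a condition holds, and give up after a time limit. The sketch below illustrates that general pattern with polling; it is not how Waldo works internally. The function `wait_then_tap`, its parameters, and the `upload_finished` check are hypothetical, and the 30-second default is borrowed from the example above.

```python
import time
from typing import Callable


def wait_then_tap(
    is_ready: Callable[[], bool],
    tap: Callable[[], None],
    time_limit_s: float = 30.0,
    poll_interval_s: float = 0.5,
) -> bool:
    """Poll is_ready() until it returns True or time_limit_s elapses.

    Calls tap() only once the condition holds. Returns True if the tap
    happened, or False if the time limit ran out first.
    """
    deadline = time.monotonic() + time_limit_s
    while True:
        if is_ready():
            tap()  # Condition met (e.g. the upload finished): safe to interact.
            return True
        if time.monotonic() >= deadline:
            return False  # Time limit exceeded: fail instead of tapping too early.
        time.sleep(poll_interval_s)


if __name__ == "__main__":
    # Hypothetical stand-in for "upload finished": becomes ready on the 3rd check.
    checks = {"n": 0}

    def upload_finished() -> bool:
        checks["n"] += 1
        return checks["n"] >= 3

    tapped = wait_then_tap(upload_finished, lambda: print("tapped photo"),
                           time_limit_s=5.0, poll_interval_s=0.1)
    print("tapped:", tapped)
```

Here `is_ready` plays the role of the assertion on an element that only appears after the upload completes, and `time_limit_s` plays the role of the time limit: raising it helps only when the condition genuinely needs longer to become true.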

In summary, key actions to address flakiness include:

- Remove an assertion when it is testing something non-deterministic.

- Add an assertion to require additional conditions that must be met before Waldo attempts an interaction.

- Adjust the time limit in the event an assertion only failed because Waldo did not wait long enough to attempt an interaction.

Dependency errors (high severity)

This means a parent test failed and prevents dependent tests from running.

- When Waldo detects a dependency error, it will show you the parent test that failed

- Fixing that parent test will allow the dependent test to run

Fixing parent tests

If the dependency failure is caused by a failed parent test, fixing the parent test will often resolve the issue causing the dependent test to fail.

App crashed (high severity)

This means the build is faulty and an error in the code needs to be fixed.

- You can download the app logs to track when the issue began to occur

- You can download the crash reports to investigate and diagnose the cause of the crash
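When a run has many failures, one way to apply the severities above is to sort failing tests so the most severe categories come first. This is a minimal sketch under stated assumptions: it assumes you have your results as (test name, category) pairs, and the `triage` function, the data shape, and the test names in the usage example are hypothetical. The ranking simply encodes this article's severity labels.

```python
from typing import List, Tuple

# Severity labels copied from this article's section headings, ranked so a
# higher number means "look at this first".
SEVERITY_RANK = {"low-medium": 1, "medium-high": 2, "high": 3}

CATEGORY_SEVERITY = {
    "Assertions failed": "low-medium",
    "Could not perform interaction": "medium-high",
    "Flaky Tests": "medium-high",
    "Dependency errors": "high",
    "App crashed": "high",
}


def triage(failures: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Sort (test_name, category) pairs from most to least severe.

    sorted() is stable (even with reverse=True), so failures with the
    same severity keep their original order.
    """
    return sorted(
        failures,
        key=lambda failure: SEVERITY_RANK[CATEGORY_SEVERITY[failure[1]]],
        reverse=True,
    )


if __name__ == "__main__":
    # Hypothetical results from a single run.
    results = [
        ("Login", "Assertions failed"),
        ("Checkout", "App crashed"),
        ("Upload photo", "Flaky Tests"),
    ]
    for name, category in triage(results):
        print(name, "-", category)
```

Ordering by severity is only a starting point: for dependency errors in particular, fixing the parent test first will often clear several dependent failures at once.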
